Machine learning in fisheries acoustics

Active acoustic data collected with scientific echosounders during acoustic surveys have become essential for stock assessment in fisheries resource management. Over the past three decades, a wide suite of physics-based acoustic scattering models has been developed to translate acoustic observations into biological quantities, such as biomass and abundance, for different marine organisms. In parallel, quantitative scientific echosounders have progressed from specialized equipment to one of the standard instruments on fisheries survey vessels.

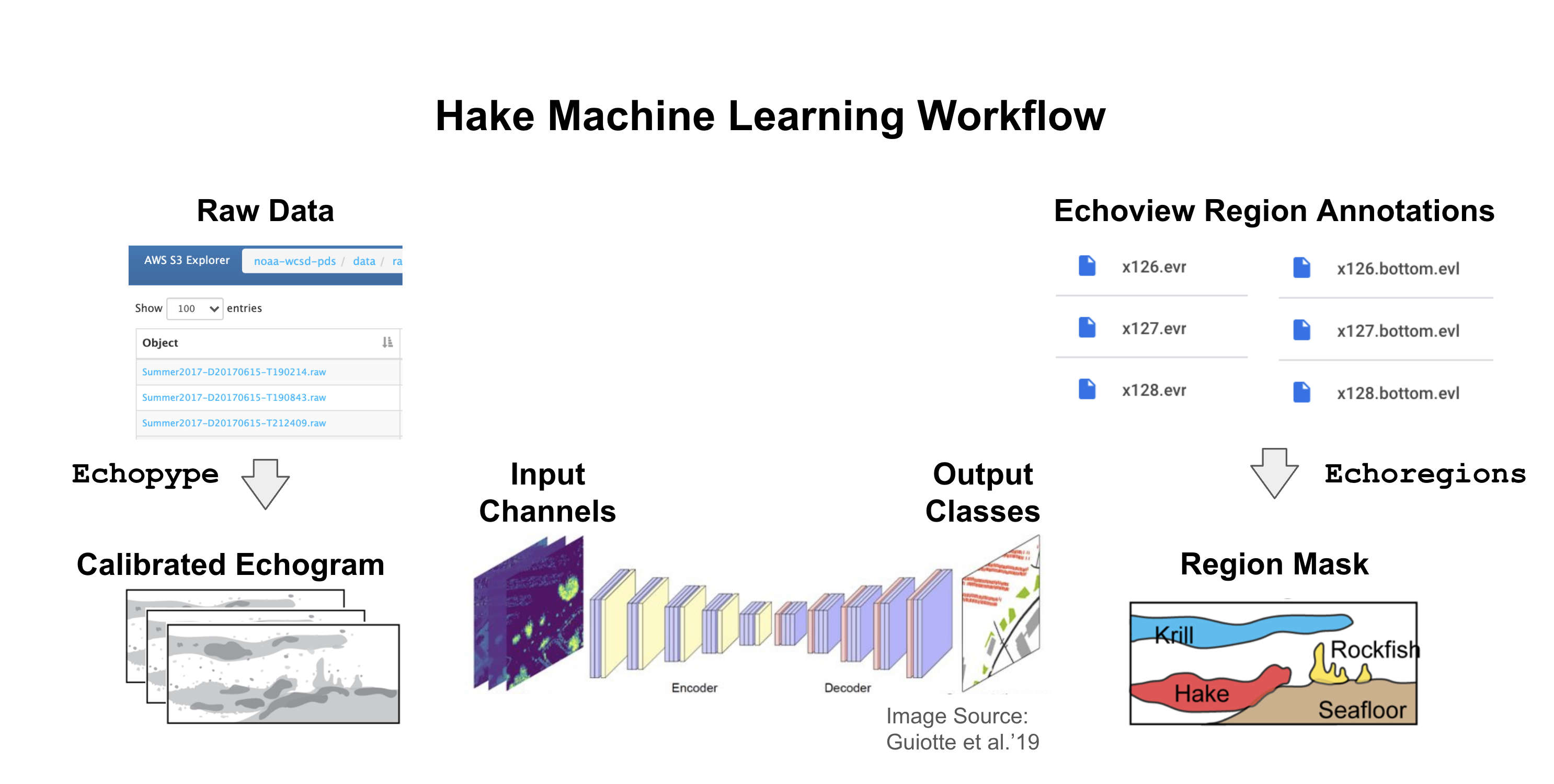

To take full advantage of the large and complex datasets these instruments produce, this project combines the development of machine learning methodology with a cloud-based workflow to accelerate the extraction of biological information from fisheries acoustic data. Our group has developed and used Echopype, a Python package for parsing raw sonar backscatter data, and Echoregions, a Python package for parsing Echoview annotation data. Converting data from Echoview and proprietary echosounder formats into Python data products lets us integrate with the rich ecosystem of open-source scientific computing tools, and in turn use our data to train deep learning models that predict regions of interest in echograms.
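To make the idea of training on annotated regions concrete, the sketch below turns one region outline into a binary mask on an echogram grid, which is the kind of label a segmentation model can learn from. It is a minimal, standard-library illustration and does not use the Echopype or Echoregions APIs: the function names (`point_in_polygon`, `region_to_mask`), the polygon format of (ping index, depth in meters) pairs, and the example values are all assumptions made for this example.

```python
from typing import List, Sequence, Tuple

# Hypothetical vertex format: (ping_index, depth_m)
Point = Tuple[float, float]


def point_in_polygon(x: float, y: float, polygon: Sequence[Point]) -> bool:
    """Ray-casting test: True if (x, y) lies inside the polygon."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through y?
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge crosses that line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def region_to_mask(
    polygon: Sequence[Point], n_pings: int, depths: Sequence[float]
) -> List[List[int]]:
    """Return mask[ping][depth_bin]: 1 inside the region, 0 outside."""
    return [
        [1 if point_in_polygon(p, d, polygon) else 0 for d in depths]
        for p in range(n_pings)
    ]


# Assumed example: a rectangular annotation spanning pings 1-3, depths 10-30 m
region = [(0.5, 5.0), (3.5, 5.0), (3.5, 35.0), (0.5, 35.0)]
depths = [0.0, 10.0, 20.0, 30.0, 40.0]
mask = region_to_mask(region, n_pings=5, depths=depths)

for row in mask:
    print(row)
```

In a real workflow the grid would come from the ping time and depth coordinates of the parsed echogram, and the mask would be paired with the backscatter values as a training target.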

This project is conducted in close collaboration with the Fisheries Engineering and Acoustics Technology (FEAT) team at the NOAA Fisheries Northwest Fisheries Science Center (NWFSC) and uses data collected over the past 20 years off the west coast of the U.S. by the Joint U.S.-Canada Integrated Ecosystem and Pacific Hake Acoustic-Trawl Survey (also known as the “Hake survey”).

Funding agency: NOAA Fisheries